The Koyeb Serverless Engine: from Kubernetes to Nomad, Firecracker, and Kuma

At Koyeb, our mission is to provide the fastest way to deploy applications globally. We are building a platform that lets developers and businesses run applications easily, a platform where you don't need to think about or deal with the resiliency and scalability of your servers: a serverless platform.

Ironically, a serverless platform is actually full of servers. As a cloud service provider, we operate the infrastructure for you and abstract it as much as possible.

The initial technological choices you make as a cloud service provider are crucial. Not only do they determine the features you can develop, but they also determine the resiliency and scalability of your entire platform.

We decided to build our own serverless engine, one that would not be limited by existing implementations. The first version of Koyeb was built on top of Kubernetes and allowed us to quickly build a working cloud platform. After a few months of operating with this version, we decided to move user workloads from Kubernetes to a custom stack based on Nomad, Firecracker, and Kuma.

We wrote this blog post to explain why we moved from Kubernetes to a custom stack to power our users' serverless workloads. This post is written from our point of view as a cloud service provider.

- Koyeb's requirements: Fast, Global, Secure, Scalable

- Building on top of the right abstraction layer

- The limits of Kubernetes

- The stack behind Koyeb Serverless Engine: Nomad, Firecracker, Kuma on bare metal... and Kubernetes

Koyeb's requirements: Fast, Global, Secure, Scalable

Before diving into the technical details, let me outline the requirements that led us to where we are today.

At Koyeb, we are building a next-generation serverless platform that should be the fastest way to deploy applications globally. There are four core pillars we want to achieve:

- Fast: we want the platform to be quick, both when you deploy your application and when the application is running. We believe deploying a software update should take minutes or ideally seconds. No developer wants to wait dozens of minutes before an upgrade is deployed, especially when working on development or pre-production environments. Execution should also be fast: your workloads should not be slower in the cloud than on your laptop.

- Global: for businesses, deploying globally is rarely optional in 2021, and it is definitely not optional for us, as we have customers all around the world. Whether you're operating a global B2C brand or a B2B company, if you have users all around the world, you need a simple and effective way to operate in multiple regions. Compliance and performance are the two drivers here.

- Secure: as a cloud service provider, security is crucial, especially as we operate a multi-tenant environment. Isolation between customers should be reliable, both at the host and network level. From a user perspective, security should be built-in and should not be an option.

- Scalable: to be competitive in the market, we need to offer a scalable platform, one able to support both your growth and our own. We've already experienced the pain of struggling with growth in a previous life. To better support our customers all around the world, we also want to be able to run on different cloud service providers depending on location and to respect sovereignty requirements.

Now that we have a list of requirements, let's move to the technical options.

Building on top of the right abstraction layer

The options

We considered every level of abstraction on which we could build a full-featured platform able to support our mission and requirements. We had roughly four options; here they are, sorted from the highest level of abstraction to the one where you deal directly with hardware:

- Cloud provider primitives: Building on top of the high-level function and container primitives of cloud providers

- Kubernetes: Using Kubernetes to power our user workloads

- VMs or BareMetal: Building a purpose-built solution, on top of Virtual Machines or BareMetal servers

- Racking: Operating our own physical infrastructure and network (aka racking BareMetal machines)

Our own users face the same kind of options to deploy and operate their production applications. Most of them will rule out options 3 and 4 as these are irrelevant for most businesses nowadays. We believe anybody who is not in the infrastructure business should try to build on top of high-level providers like Koyeb, aka option 1.

Our first choice: Kubernetes to the rescue

We quickly ruled out racking servers (option 4). We've been in the business of racking and even building BareMetal servers before, but there are now enough options to pick from, at least for our current needs. In 2021, building software usually involves relying on a cloud service provider. We are no exception, as we are building a cloud service provider on top of other cloud service providers.

We also ruled out building on top of high-level abstractions of cloud service providers (option 1) since it doesn't provide the control we need. This option would probably allow us to develop features faster, but our platform would suffer from the same product limits as these abstractions. Since our goal is to remove these limits to improve our users' deployment experience, this choice would be counter-productive.

With this in mind, we started to build on top of Kubernetes, believing this would be the right abstraction layer to quickly build a reliable platform meeting our requirements. Spoiler alert, after several months we decided to build a purpose-built solution instead...

The limits of Kubernetes

At Koyeb, we have been operating Kubernetes clusters for two years now and, once we started to support custom user functions and containers, we began to see limits.

Here is a broad overview of the limits we started to hit:

- Complexity: Let's start with the most common complaint you might hear: it's complex. In our case, we needed to extend the core, and even when operating a cloud platform is your business, understanding how Kubernetes works and why it was implemented this way is hard.

- Security: Kubernetes provides some options to isolate multi-tenant serverless workloads. We first worked with gVisor, but its performance was disappointing. We then explored Firecracker, but running Firecracker on Kubernetes was still experimental at the time.

- Global and multi-zone deployments: User workloads on Koyeb need to be able to run in multiple zones. Kubernetes doesn't support spreading a single cluster across distant data centers out of the box: implementing multi-zone with Kubernetes requires deploying a full cluster per zone, with a dedicated control plane for each data center.

- Scalability: Kubernetes has well-known limits on the number of nodes per cluster (the upstream documentation targets clusters of up to 5,000 nodes), which means multi-cluster support needs to be implemented very early when operating at scale.

- Upgradability: Release cycles are really short, which is great but difficult to manage in production. Upgrading every two months, and testing and validating each time that the upgrade does not break production, quickly becomes a full-time job.

- Overhead: Kubernetes clusters tend to have considerable overhead. On each hypervisor, Kubelet uses between 10% and 25% of RAM, which is a non-negligible cost. Spoiler alert: with our new architecture, we're closer to 100 MB.
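To make the multi-zone and scalability points concrete, here is a back-of-the-envelope sketch in Go. It assumes every zone gets its own dedicated cluster(s), as described above, and uses the 5,000-node ceiling from the Kubernetes documentation on large clusters; the zone names and node counts are hypothetical, not Koyeb's fleet.

```go
package main

import "fmt"

// maxNodesPerCluster is the largest cluster size covered by the Kubernetes
// "Considerations for large clusters" guidance.
const maxNodesPerCluster = 5000

// clustersNeeded counts the Kubernetes clusters, and therefore control planes,
// required when each zone gets dedicated cluster(s) and no cluster may grow
// beyond maxNodes nodes.
func clustersNeeded(nodesPerZone map[string]int, maxNodes int) int {
	total := 0
	for _, nodes := range nodesPerZone {
		// Ceiling division: 5,001 nodes need two clusters, not one.
		total += (nodes + maxNodes - 1) / maxNodes
	}
	return total
}

func main() {
	// Hypothetical fleet: number of hypervisors in each zone.
	fleet := map[string]int{"zone-a": 1200, "zone-b": 8000, "zone-c": 300}
	fmt.Println(clustersNeeded(fleet, maxNodesPerCluster)) // 4
}
```

Each extra cluster is one more control plane to operate and upgrade, which is how the multi-zone, scalability, and upgradability limits compound each other.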

Kubernetes: a generalist orchestration platform

Back in the early 2010s, before Docker and Kubernetes even existed, the hype was around OpenStack, which is the same kind of project as Kubernetes: an all-purpose orchestration platform.

In our experience as a cloud service provider, when you work with large general-purpose software projects, you tend to hit the same issues:

- once you start scaling or trying to develop advanced features on such a platform, you need to fully understand the internals

- you spend a lot of energy keeping up with upgrades that might not be relevant to you or might break your integrations, and you suffer from decisions you have no control over

Don't get me wrong, Kubernetes definitely helped us quickly develop the first generations of the Koyeb engine. Being able to deploy pre-written Helm Charts is a powerful way to deploy existing software.

As a cloud service provider, we knew we would eventually hit its limits and would either need to dive deep into Kubernetes and contribute to its core, or design the Koyeb platform differently...

The stack behind Koyeb: Nomad, Firecracker, Kuma on bare metal... and Kubernetes

With all these limits in mind, we started to re-design the platform to support our goals. After some testing, we decided to build our serverless platform using a combination of powerful technologies. Enter Nomad, Firecracker, and Kuma on BareMetal... and Kubernetes.

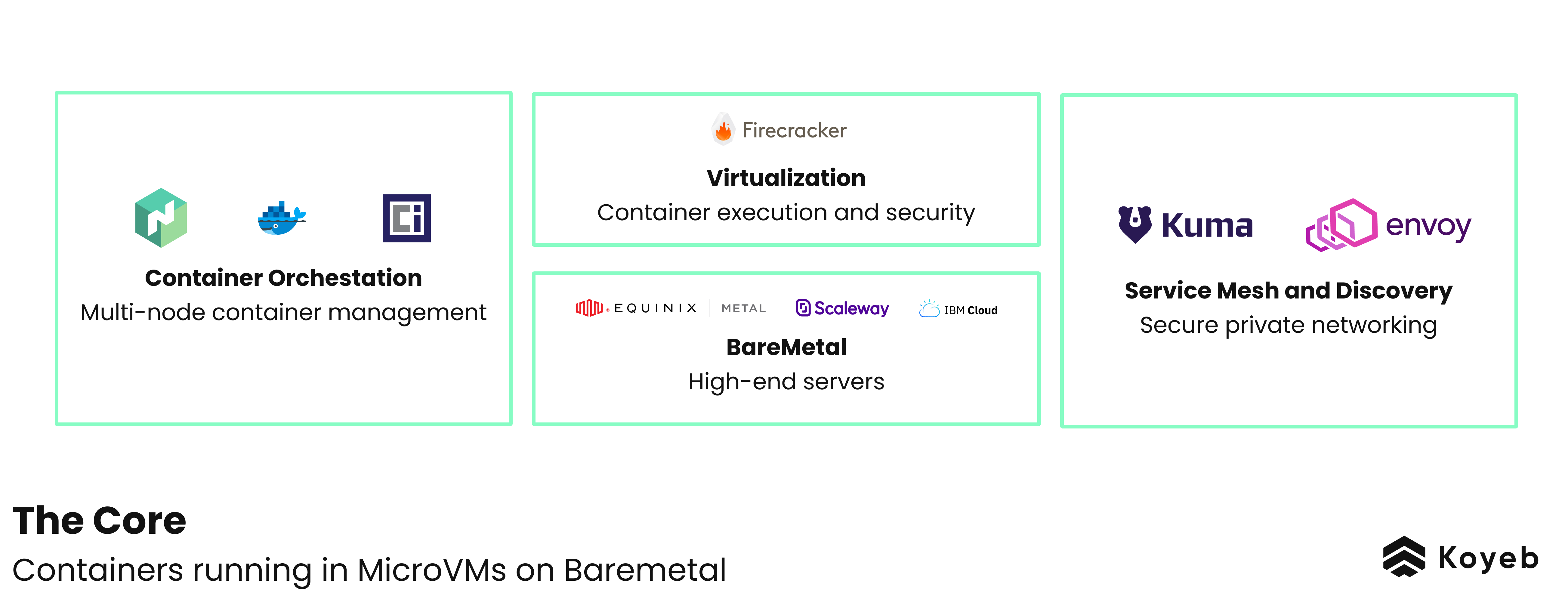

Overall architecture

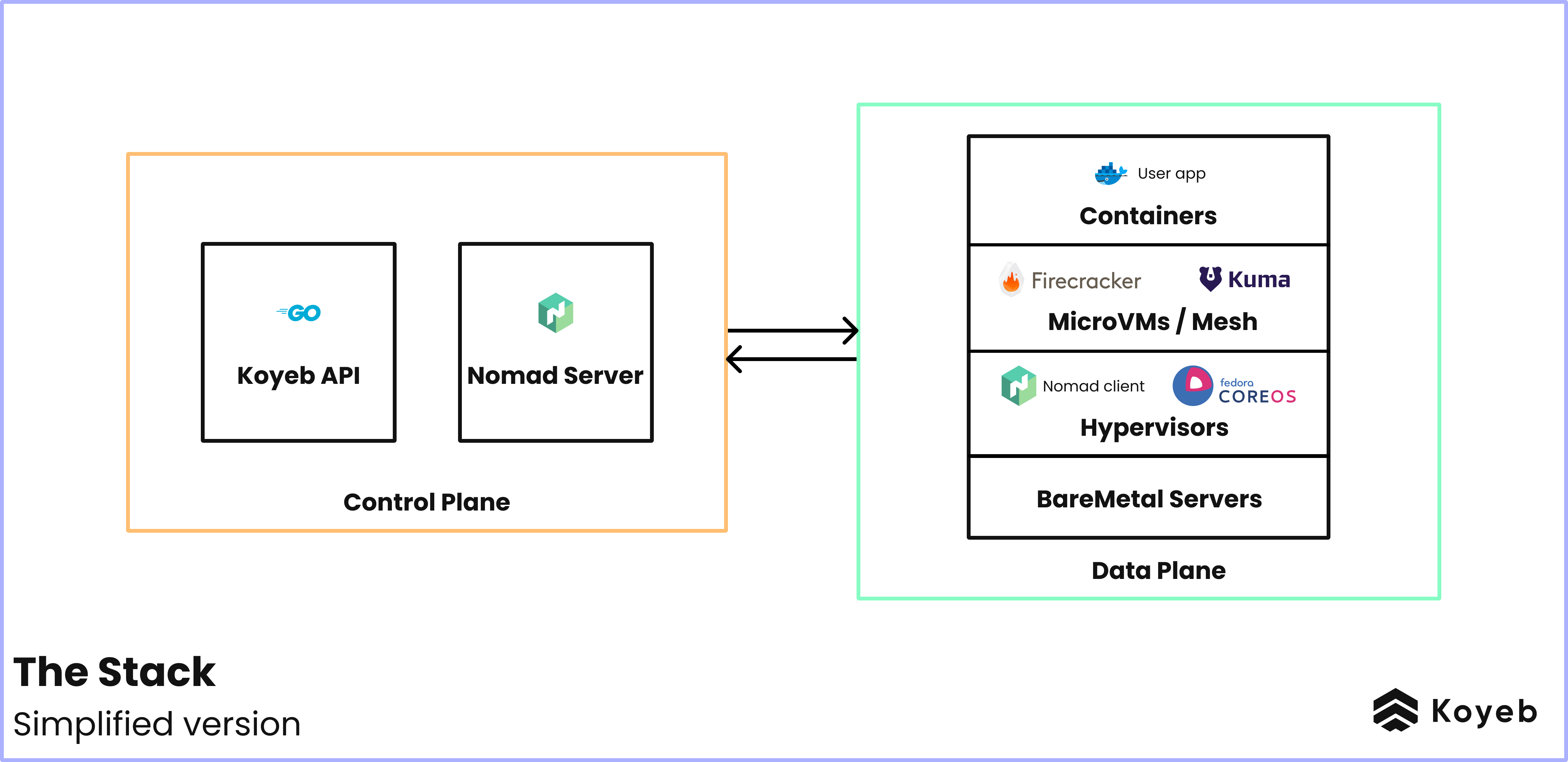

To understand why we combined these technologies, let's take a step back. On the Koyeb platform, there are two key parts:

- the Koyeb Control Plane is composed of the Go APIs behind app.koyeb.com and their related databases. It receives API calls and handles all the logic needed to deploy apps on the Koyeb Data Plane. It needs to run 24/7/365, with requirements quite similar to those of the applications our users run on Koyeb.

- the Koyeb Data Plane hosts users' deployed applications. It is the core of the platform: it needs to support running millions of containers across thousands of hypervisors, which makes it the most demanding part of the platform.

On each hypervisor of the data plane, there are 4 major elements:

- the container runtime and virtualization technology, Firecracker, which executes the applications and keeps multi-tenant workloads isolated and secure without sacrificing performance

- the networking technology, composed of Kuma and Envoy, which provides the service mesh and discovery engine that enable seamless private networking between services

- the orchestration engine, Nomad, which performs all provisioning operations when deployments or scaling events occur

- the operating system and servers, CoreOS on High-End BareMetal servers, where the applications run with the lowest possible overhead

And the Control Plane runs on... Kubernetes. Since we cannot run the Koyeb Control Plane on Koyeb itself without creating a circular dependency, we run the APIs on top of Kubernetes.

Diving into Nomad, Firecracker, and Kuma

Nomad for Container Orchestration

Nomad is a workload orchestration engine which can be used to manage containers and non-containerized applications across clouds. Nomad lets us deploy and manage the containerized applications that are running on the platform. It provisions Firecracker microVMs and communicates with containerd to manage and run the containers.

We selected Nomad to do the orchestration because compared to Kubernetes it is:

- easily extendable. Runtimes can easily be customized, and integrating with Firecracker was seamless using a custom Nomad driver.

- simpler to use. The scope of the project is much smaller: it doesn't cover everything from runtime to networking, and that's just what we needed.

- multi-zone out of the box, simplifying how we make serverless workloads global.

- easy to run with a control plane at the region level, reducing the overhead of per-region Kubernetes clusters.

- lightweight, with a smaller footprint, and easily scalable.

Firecracker for Virtualization

Firecracker is a lightweight virtualization technology purpose-built to securely and efficiently run multi-tenant serverless workloads. We needed a virtualization technology that would keep our clients' workloads secure and isolated, and provide fast boot times for new instances without compromising performance. That's exactly what Firecracker does.

We've written about Firecracker MicroVMs in a previous blog post if you want to dive deeper.

The key benefits are:

- Multi-tenancy security and isolation,

- High density on bare metal machines thanks to low overhead,

- Fast boot times for autoscaling microservices and functions
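The density benefit comes down to simple arithmetic: whatever the host agents and the per-VM overhead don't consume is left for customer workloads. The Go sketch below compares the two host-agent figures quoted earlier (10% of RAM for Kubelet versus roughly 100 MB) on a hypothetical 256 GiB hypervisor. It uses 5 MiB as an upper bound for the under-5 MiB per-microVM memory overhead advertised by the Firecracker project, and a hypothetical 512 MiB instance size.

```go
package main

import "fmt"

// instancesPerHost returns how many instances of instanceMiB fit on a host with
// hostMiB of RAM once the host agents (agentMiB) and the per-microVM
// virtualization overhead (perVMMiB) are subtracted.
func instancesPerHost(hostMiB, agentMiB, instanceMiB, perVMMiB int) int {
	free := hostMiB - agentMiB
	if free <= 0 {
		return 0
	}
	return free / (instanceMiB + perVMMiB)
}

func main() {
	const (
		hostMiB     = 256 * 1024 // hypothetical 256 GiB hypervisor
		instanceMiB = 512        // hypothetical instance size
		perVMMiB    = 5          // upper bound of Firecracker's advertised <5 MiB overhead
	)
	kubeAgents := hostMiB / 10 // low end of the 10-25% range quoted for Kubelet
	newAgents := 100           // roughly 100 MB (treated as MiB) with the new stack
	fmt.Println(instancesPerHost(hostMiB, kubeAgents, instanceMiB, perVMMiB)) // 456
	fmt.Println(instancesPerHost(hostMiB, newAgents, instanceMiB, perVMMiB))  // 506
}
```

Even at the low end of the range, that is about 50 more instances per host; at 25% the gap widens to 380 versus 506.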

There are a few more benefits that we go over in detail in our post 10 Reasons Why We Love Firecracker MicroVMs.

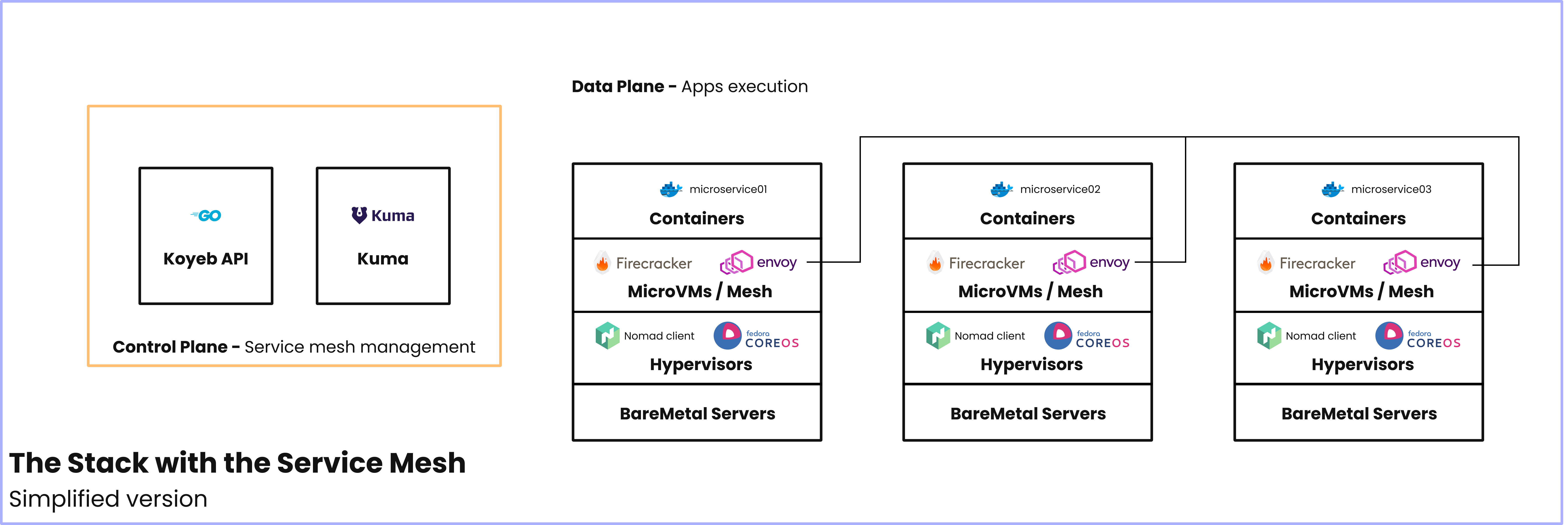

Kuma for Service Mesh Control Plane

Kuma is an open-source service mesh built on top of Envoy. It is a control plane that connects distributed services running on Kubernetes and VMs.

We built Koyeb to host distributed and microservice architectures. Distributed architectures need a way to connect the services that run within them. A service mesh is the dedicated infrastructure layer that is responsible for the secure, quick, and reliable communication between services in containerized and microservice applications. For more information about service meshes, check out our post on service meshes and microservices.

Kuma is one of the rare service meshes that are not tightly coupled to a workload scheduler. This distinguishes it from alternative service meshes like Linkerd and Istio, which are built around Kubernetes. Moreover, Kuma is cloud-agnostic and supports multi-zone deployments out of the box.

The Kuma control plane allows us to secure, observe, and manage the microservice network. Next to each service instance on Koyeb is an Envoy Proxy. These proxies form the data plane, which connects to the Kuma control plane.

Proxies are configured by the control plane: the hostnames your instances should be able to resolve through DNS, their retry policies, and more are all pushed to each proxy from the control plane.
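Kuma delivers this configuration to each Envoy sidecar over Envoy's xDS APIs, typically over a long-lived gRPC stream. The Go sketch below is a deliberately simplified, hypothetical model of the core idea only: the control plane owns a versioned snapshot, and a proxy applies it whenever the version moves past the one it already has. It does not use Kuma's or Envoy's real APIs; the types, hostnames, and fields are made up for illustration.

```go
package main

import (
	"fmt"
	"sync"
)

// ProxyConfig is a hypothetical, heavily simplified view of what a mesh
// control plane sends to a sidecar: names to resolve and a retry policy.
type ProxyConfig struct {
	Version    int
	DNS        map[string]string // hostname -> virtual IP
	MaxRetries int
}

// ControlPlane holds the desired configuration for all proxies.
type ControlPlane struct {
	mu      sync.Mutex
	current ProxyConfig
}

// Update stores a new desired configuration under a new version. The map is
// copied so later changes by the caller cannot leak into proxies.
func (cp *ControlPlane) Update(dns map[string]string, maxRetries int) {
	cp.mu.Lock()
	defer cp.mu.Unlock()
	copied := make(map[string]string, len(dns))
	for host, ip := range dns {
		copied[host] = ip
	}
	cp.current = ProxyConfig{Version: cp.current.Version + 1, DNS: copied, MaxRetries: maxRetries}
}

// Since returns the configuration if it is newer than the caller's version.
func (cp *ControlPlane) Since(version int) (ProxyConfig, bool) {
	cp.mu.Lock()
	defer cp.mu.Unlock()
	if cp.current.Version > version {
		return cp.current, true
	}
	return ProxyConfig{}, false
}

// Proxy stands in for an Envoy sidecar: it only applies what it is given.
// Snapshots are shared read-only between proxies.
type Proxy struct {
	Applied ProxyConfig
}

// Sync pulls from the control plane and reports whether anything changed.
func (p *Proxy) Sync(cp *ControlPlane) bool {
	cfg, changed := cp.Since(p.Applied.Version)
	if changed {
		p.Applied = cfg
	}
	return changed
}

func main() {
	cp := &ControlPlane{}
	proxies := []*Proxy{{}, {}}
	cp.Update(map[string]string{"api.internal": "10.0.0.2"}, 3)
	for i, p := range proxies {
		fmt.Println(i, p.Sync(cp), p.Applied.DNS["api.internal"], p.Applied.MaxRetries)
	}
}
```

Real xDS adds what this sketch leaves out: separate resource types (listeners, clusters, routes, endpoints), ACK/NACK of each version by the proxy, and pushes over the stream rather than polling.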

Kuma and Envoy provide us with a great option for private networking, especially because they:

- are platform agnostic,

- support multi-region and multi-cloud deployments,

- can run in a zero-trust network environment thanks to native TLS encryption and mTLS authentication,

- provide natively secure communication between services.

The Koyeb Serverless Platform is Purpose-Built

We built Koyeb to be a secure, powerful, and developer-friendly serverless platform. Providing this serverless experience means running multi-tenant workloads on bare metal servers in a secure and resource-efficient manner. Moving from Kubernetes to a custom stack of Nomad, Firecracker, and Kuma enables us to provide the serverless experience we envision.

You can use Koyeb to host web apps and services, Docker containers, APIs, event-driven functions, cron jobs, and more. The platform has built-in Docker container deployment and also provides git-driven continuous deployment!

Discover the serverless experience by signing up today. Also, feel free to join us on the community platform.

And if you want to help build a serverless cloud service provider and dive deeper into these technologies, we're hiring!