Service Discovery: Solving the Communication Challenge in Microservice Architectures

Whether you're breaking up a monolith or building a green-field application, you may consider using a microservice architecture. Like all app architectures, this model brings opportunities and challenges that a developer must be aware of in order to make the most of this app design.

One such challenge is ensuring communication between your microservices. Since a microservice architecture is composed of loosely-coupled and distributed services, executing the tasks and functions of an app depends on the successful communication between these services.

In this post, we cover service discovery, the vital component in a microservice architecture that enables communication between services. We begin by reviewing DNS to understand how service discovery came to be, then we discuss why service discovery is the best solution to the microservice communication dilemma, and finally, we compare the different service discovery models and their real-world implementations.

Service Discovery with the Internet's Original Phonebook: Domain Name System (DNS)

Created in 1983, DNS remains the protocol that enables client services to find the location of other services on the internet.

While we as humans best understand and remember websites and apps by their names, computers recognize web services by numbers, specifically their IP addresses and network ports.

- IP addresses identify the device or computer that hosts services while the network ports identify where the application or service is running on the computer system.

DNS is the process that transforms human-readable domain names into machine-comprehensible numbers.
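As a small illustration of this name-to-number translation, the sketch below asks the operating system's resolver for the addresses behind a hostname using Python's standard `socket.getaddrinfo`. The helper name `resolve` and the use of `localhost` as the example host are assumptions made for this illustration only.

```python
import socket


def resolve(hostname, port):
    """Return the sorted (ip_address, port) pairs the system resolver gives for hostname."""
    infos = socket.getaddrinfo(hostname, port, type=socket.SOCK_STREAM)
    # Each entry is (family, type, proto, canonname, sockaddr); for both IPv4 and
    # IPv6 the sockaddr tuple starts with the IP address followed by the port.
    return sorted({(info[4][0], info[4][1]) for info in infos})


if __name__ == "__main__":
    # "localhost" keeps the example independent of external network access.
    for ip_address, port in resolve("localhost", 80):
        print(ip_address, port)
```

The same call works for a public domain name, in which case the lookup goes through the recursive resolver configured on the machine.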

Domain Name Types and Examples

| Domain Type | Example |
|---|---|
| Root Domain | . |
| Top-Level Domain | com, net, org |
| Domain Name | google, twitter, koyeb |
| Subdomain | www, mail, store, m |

Locating a service with DNS or cached memory

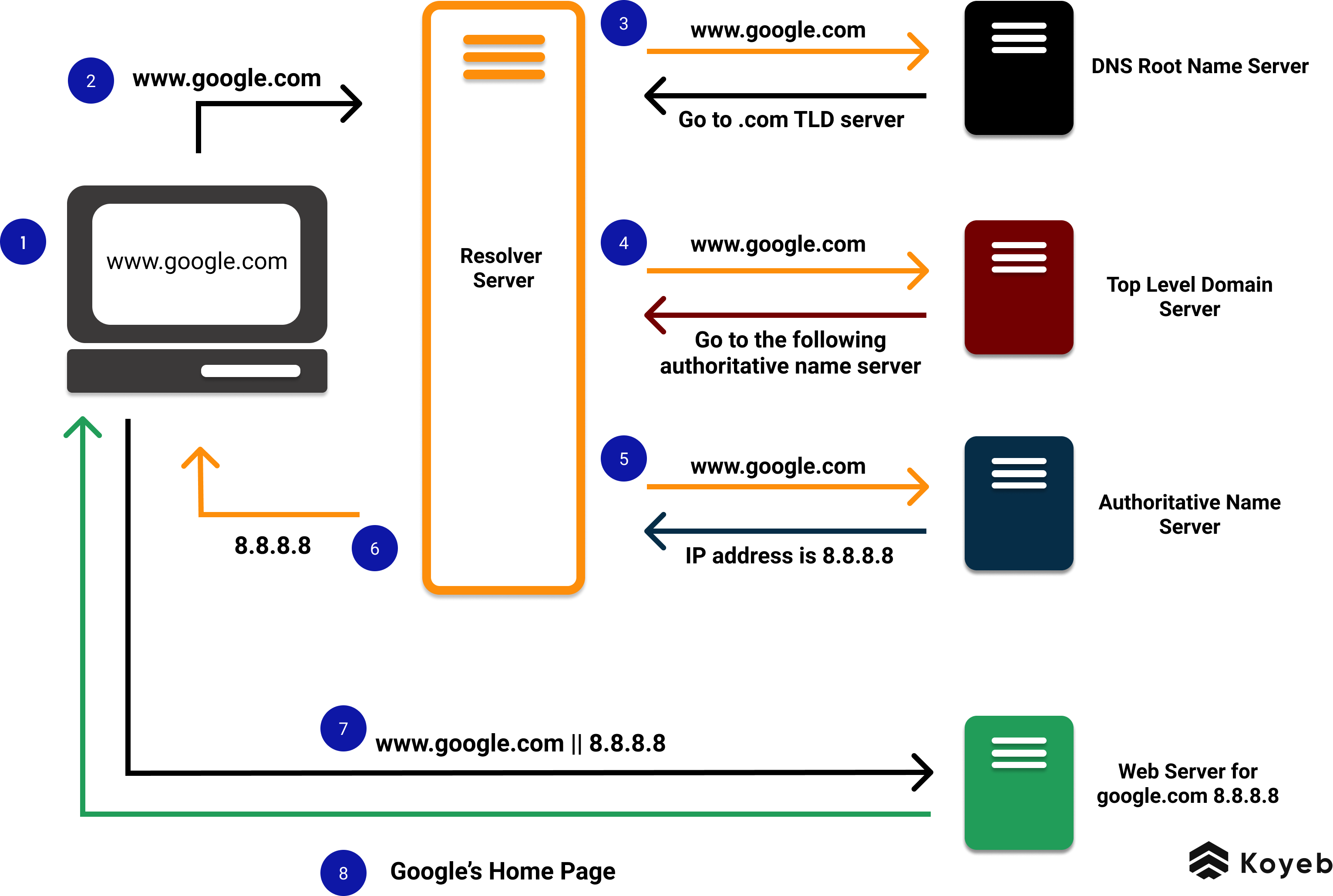

When a client service requests the location of another service, the client machine sends a request to a recursive resolver, the middleman server between the client and the DNS network. The recursive resolver is in charge of resolving the fully qualified domain name by speaking to various DNS servers and will automatically identify the IP address of the other service in one of two ways:

- If the resolver has never received a request for this name (for example, google.com), it sends the request to a root name server, which returns the appropriate Top-Level Domain (TLD) server (in this example, the one for .com). The TLD server then returns the location of the appropriate authoritative name server. Finally, the authoritative name server returns the IP address to the recursive resolver, which in turn returns it to the client machine. The answer is also cached (stored in local memory) for a period called the TTL (Time to Live) to speed up subsequent requests.

- Until the TTL expires, all subsequent requests for the same domain are answered from the cache, and the recursive resolver returns the IP address to the client immediately.

The diagram above displays a typical DNS query. Despite the number of requests and responses involved, the whole process often takes only a fraction of a second.

Thanks to cached IP addresses, domain requests are often resolved quickly by the resolver server. This caching also explains in part why DNS scales so well, as well as why DNS does not work as an out-of-the-box solution for general service discovery: a cached answer can keep pointing to a location after the service has moved.
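The lookup path and the TTL cache described in this section can be sketched as a toy resolver. Everything here is a simplified assumption for illustration: the dictionaries stand in for the root, TLD, and authoritative servers, and the names `RecursiveResolver` and `upstream_queries`, the domain `www.example.com`, and the address `192.0.2.10` are hypothetical. Real DNS involves network queries and many more record types.

```python
import time

# Hypothetical in-memory stand-ins for the DNS hierarchy (not real DNS data).
ROOT_SERVER = {"com": "tld-com"}                          # TLD -> TLD server
TLD_SERVERS = {"tld-com": {"example.com": "ns-example"}}  # domain -> authoritative server
AUTHORITATIVE = {"ns-example": {"www.example.com": ("192.0.2.10", 60)}}  # name -> (IP, TTL in seconds)


class RecursiveResolver:
    """Toy recursive resolver that caches answers until their TTL expires."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._cache = {}            # name -> (ip, expiry time)
        self.upstream_queries = 0   # how many servers were asked in total

    def resolve(self, name):
        cached = self._cache.get(name)
        if cached is not None and cached[1] > self._clock():
            return cached[0]        # still fresh: answer straight from the cache

        labels = name.split(".")
        tld = labels[-1]
        domain = ".".join(labels[-2:])

        tld_server = ROOT_SERVER[tld]                    # 1. ask the root server
        self.upstream_queries += 1
        auth_server = TLD_SERVERS[tld_server][domain]    # 2. ask the TLD server
        self.upstream_queries += 1
        ip, ttl = AUTHORITATIVE[auth_server][name]       # 3. ask the authoritative server
        self.upstream_queries += 1

        self._cache[name] = (ip, self._clock() + ttl)
        return ip
```

Resolving the same name twice within the TTL only walks the hierarchy once. The second answer comes from the cache, which is fast but can also be stale if the service has moved in the meantime.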

Communication in a Microservice Architecture

Synchronous communication between microservices typically uses protocols such as HTTP or gRPC. The issue that arises is ensuring that each microservice knows which API URL to call.

Hard coding URLs is not a viable way to enable microservice communication

One solution to this obstacle could be to hard code the URLs into the microservice, but this approach quickly runs into three problems:

- Changes require code updates. Not only is that annoying, but it is also time-consuming and, depending on the size of your application, very challenging.

- The cloud is full of dynamic URLs. If you deploy your app to the cloud, your cloud service provider will produce unique URLs that are unpredictable.

- Multiple environments mean multiple URLs. URLs will vary between local, staging, and production environments. Hard coding URLs is not flexible enough to work across the multiple environments your deployment will pass through.

Given all of these issues, a more viable solution to microservice communication is necessary.

Service Discovery provides a dynamic microservice communication solution

Service discovery is the essential component in a microservice architecture that makes dynamic communication between microservices possible. It is the process that automatically detects, registers, and shares the locations of services in a network.

In practice, a lot of general service discovery is local DNS with no caching. With service discovery, a central server, also known as a service registry, is responsible for registering all of the URL addresses and port locations of the services that exist in and interact with your app. Client servers can interact with this central server to locate the addresses of other services.

This central server is a layer of abstraction that is flexible enough to handle dynamic URLs and automatically respond to code changes.
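To make the idea of a service registry concrete, here is a minimal in-memory sketch. It is not how any particular registry product works; the class names `ServiceRegistry` and `Instance`, the method names, and the example addresses used in the tests are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instance:
    """One running copy of a service, identified by host and port."""
    host: str
    port: int

    @property
    def url(self):
        return f"http://{self.host}:{self.port}"


class ServiceRegistry:
    """Hypothetical central registry mapping service names to their instances."""

    def __init__(self):
        self._services = {}  # service name -> set of Instance

    def register(self, name, host, port):
        instance = Instance(host, port)
        self._services.setdefault(name, set()).add(instance)
        return instance

    def deregister(self, name, instance):
        self._services.get(name, set()).discard(instance)

    def lookup(self, name):
        # Sorted so that callers get a stable order.
        return sorted(self._services.get(name, ()), key=lambda i: (i.host, i.port))
```

A service would call `register` when it starts, and other services would call `lookup("orders")` instead of carrying a hard-coded URL. If the instance moves, only the registry entry changes, not the calling code.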

Health Checks and Deregistering Old Services

In addition to helping services locate one another, this communication solution provides a way to perform vital health checks that verify your services and systems are up and running. Also, if a service becomes obsolete and goes offline, it can be deregistered via service discovery.
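One common way to combine health checks with deregistration is a heartbeat with a timeout: each instance reports in periodically, and the registry drops any instance it has not heard from recently. The sketch below assumes that approach. The class `HeartbeatRegistry`, its method names, and the 30-second default are hypothetical choices for this example, not a description of a specific tool.

```python
import time


class HeartbeatRegistry:
    """Toy registry that treats an instance as healthy while its heartbeats keep arriving."""

    def __init__(self, ttl_seconds=30.0, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._last_seen = {}  # (service, address) -> time of last heartbeat

    def heartbeat(self, service, address):
        """Register the instance, or refresh it if it is already known."""
        self._last_seen[(service, address)] = self._clock()

    def deregister(self, service, address):
        """Explicit removal, for example during a graceful shutdown."""
        self._last_seen.pop((service, address), None)

    def evict_stale(self):
        """Remove and return every instance whose last heartbeat is older than the TTL."""
        cutoff = self._clock() - self._ttl
        stale = [key for key, seen in self._last_seen.items() if seen < cutoff]
        for key in stale:
            del self._last_seen[key]
        return stale

    def healthy(self, service):
        """Return the addresses of instances that are still considered alive."""
        self.evict_stale()
        return sorted(addr for (svc, addr) in self._last_seen if svc == service)
```

With this design, a crashed instance disappears from lookups on its own once its heartbeats stop, while a service that shuts down cleanly can call `deregister` right away.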

Client-Side versus Server-Side Service Discovery

There are two models for service discovery: client-side and server-side discovery.

The key difference between these two models is whose responsibility it is to locate the service.

- With client-side service discovery, the responsibility lies with the client server whereas with server-side service discovery it is the server-side's responsibility to manage service discovery.

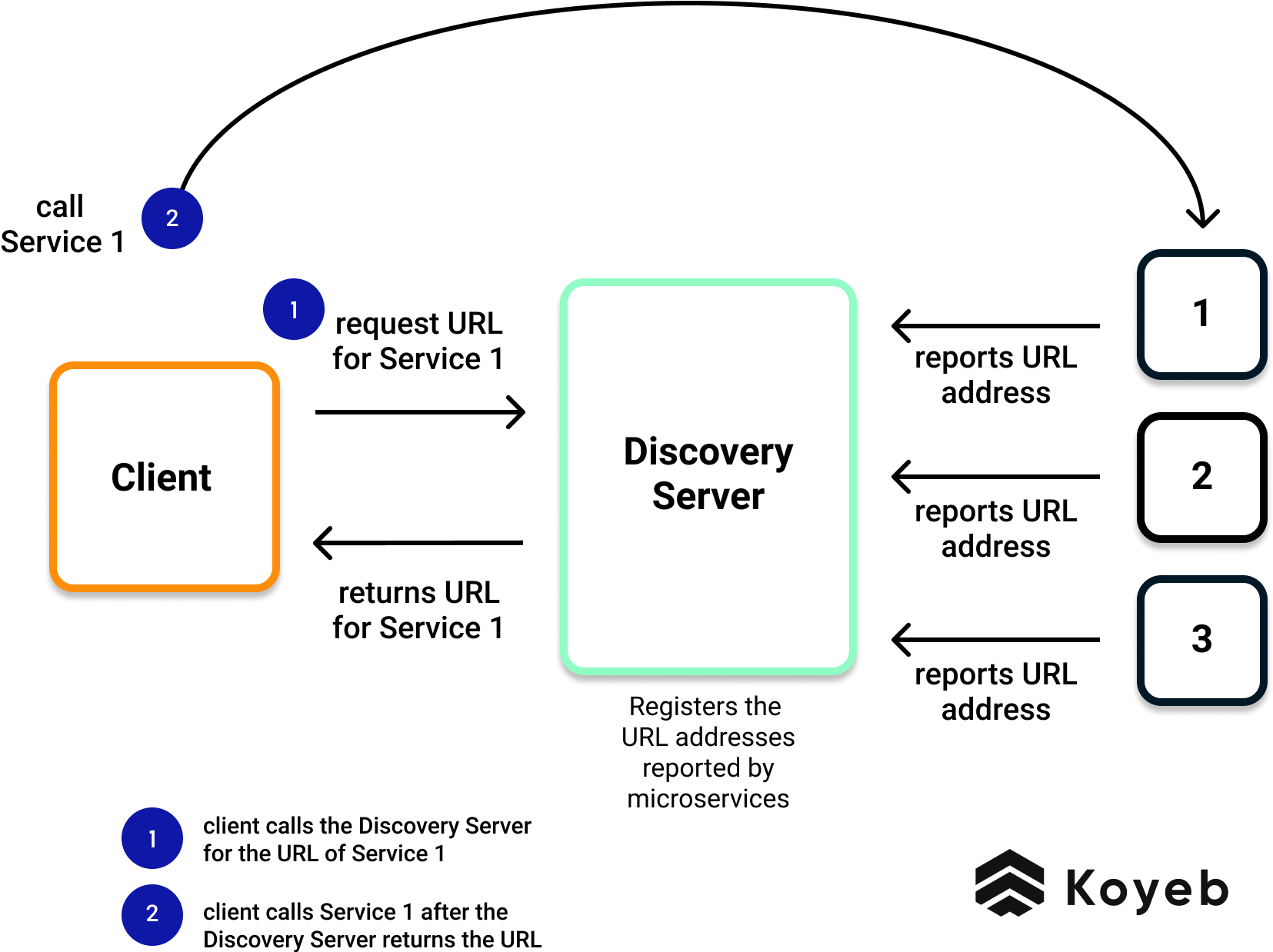

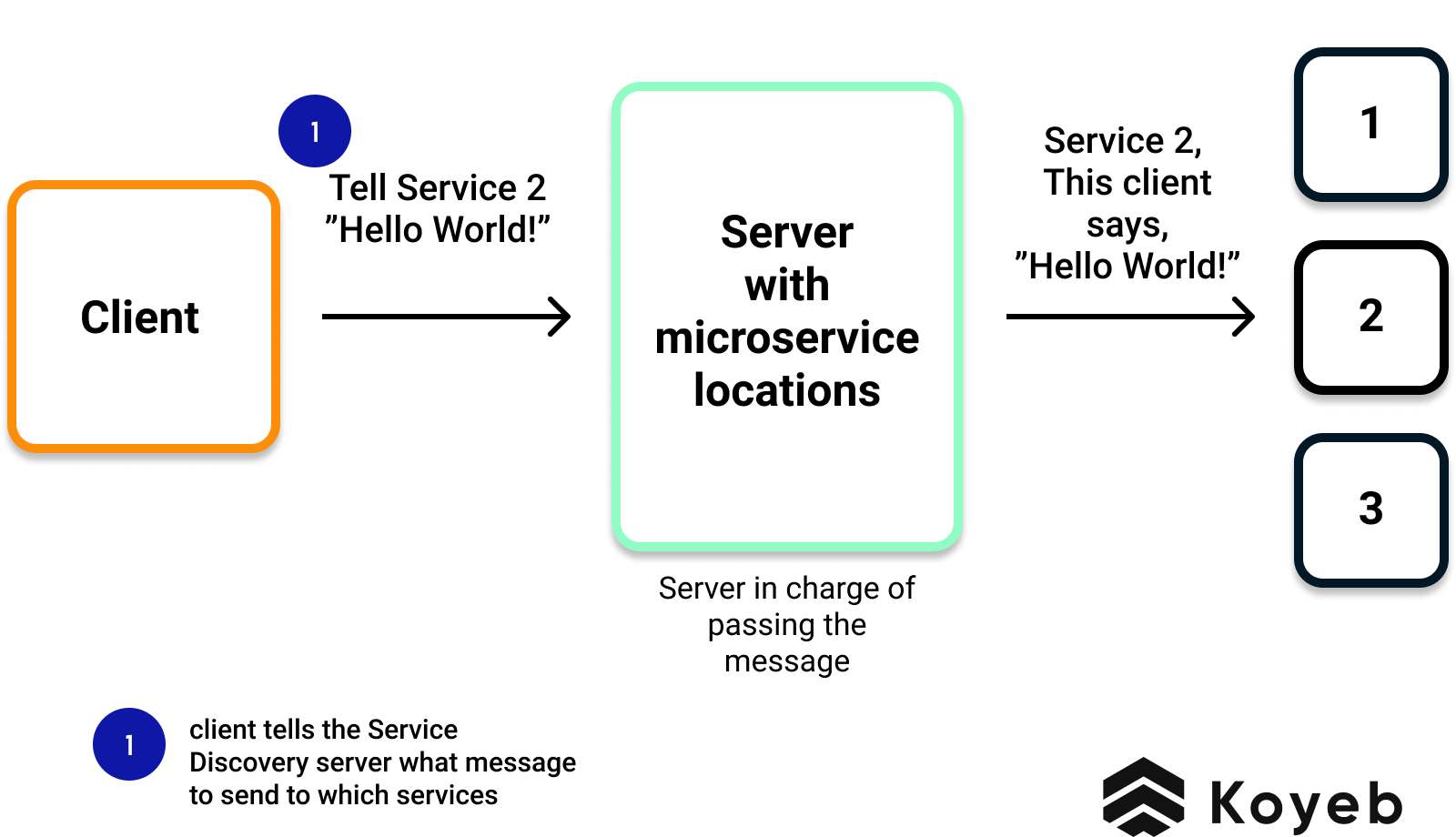

As mentioned above, service discovery requires a layer of abstraction between the decoupled services. The two diagrams above display how this central server functions to register and locate services in a microservice architecture.

Client-Side Service Discovery

In client-side service discovery, the client server has the responsibility to identify and call the desired service. It does this by first making a call to a Discovery Server, which is the central server that provides a layer of abstraction and acts as the phone book, registering all the locations of the different services.

Drawback: The drawback of this model is that the client has to make two separate calls in order to reach and communicate with the targeted microservice.

Advantage: The advantage of client-side service discovery is that the client application does not have to route its traffic through a router or a load balancer and therefore avoids that extra hop.

Examples of Client-Side Service Discovery: DNS, and Eureka and Ribbon by Netflix.
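The two calls made in the client-side model can be sketched as follows. This is an illustrative outline only: the `Registry` and `DiscoveringClient` classes, the round-robin choice of instance, and the `send` callable that stands in for the real HTTP or gRPC request are all assumptions for this example.

```python
class Registry:
    """Hypothetical discovery server: answers lookups with known addresses."""

    def __init__(self, services):
        self._services = services  # service name -> list of "host:port" strings

    def lookup(self, name):
        return list(self._services.get(name, []))


class DiscoveringClient:
    """Client that locates the target service itself, then calls it directly."""

    def __init__(self, registry, send):
        self._registry = registry
        self._send = send      # callable(address, path) standing in for the real request
        self._counters = {}    # service name -> number of calls made so far

    def call(self, service, path):
        # Call 1: ask the discovery server where the service lives.
        instances = self._registry.lookup(service)
        if not instances:
            raise LookupError(f"no instances registered for {service!r}")

        # The client picks an instance itself (simple round-robin here).
        index = self._counters.get(service, 0)
        self._counters[service] = index + 1
        address = instances[index % len(instances)]

        # Call 2: talk to the chosen instance directly, with no router in between.
        return self._send(address, path)
```

Note that both the lookup and the instance selection live in the client, which is why this model needs discovery-aware client code in every service.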

Server-Side Service Discovery

In server-side service discovery, a server-side component, such as a router or load balancer, has the responsibility to receive and pass along the client server's message. The central server maintains a registry of service locations and, instead of returning the location to the client, it assumes the responsibility of locating the client's desired service and passing along the client's message.

Drawback: Depending on the service provider, a potential drawback of server-side discovery is the need to implement a load-balancing mechanism for the central server.

Advantage: The advantage of server-side service discovery is that the client server only has to make one call, making the client's application lighter since it does not have to deal with the lookup procedure or making an additional request for services.

Examples of Server-Side Discovery: NGINX Plus and NGINX.
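For comparison, the server-side model can be sketched with the lookup moved out of the client and into a router. As before, this is a simplified illustration under stated assumptions: the `Router` class, the `client_request` helper, the round-robin selection, and the `forward` callable that stands in for proxying the request are hypothetical and do not reflect how NGINX is configured.

```python
class Router:
    """Server-side discovery: the router owns the lookup and forwards the request."""

    def __init__(self, registry, forward):
        self._registry = registry  # service name -> list of "host:port" strings
        self._forward = forward    # callable(address, path) standing in for proxying
        self._next = {}            # service name -> number of requests routed so far

    def handle(self, service, path):
        instances = self._registry.get(service, [])
        if not instances:
            raise LookupError(f"no instances registered for {service!r}")

        # The router, not the client, chooses the instance (round-robin here).
        index = self._next.get(service, 0)
        self._next[service] = index + 1
        return self._forward(instances[index % len(instances)], path)


def client_request(router, service, path):
    """The client makes a single call and never sees instance addresses."""
    return router.handle(service, path)
```

The client code shrinks to one call, at the cost of an extra hop through the router, which itself has to stay available and scale with the traffic.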

Serverless Microservice Architecture with Koyeb

Serverless platforms are an advantageous way to host a microservice architecture. In addition to the flexibility and autoscaling, the Koyeb serverless platform natively provides server-side service discovery alongside its built-in service mesh.

Koyeb is a developer-friendly serverless platform to deploy apps globally. With native support for popular languages and built-in Docker container deployment, you can use Koyeb's serverless platform to deploy your web apps, APIs, event-driven functions, background workers, and cron jobs.

Since Koyeb is a unified platform that lets you combine languages, frameworks, and technologies you love, you can easily scale and run low-latency, responsive, web services and event-driven serverless functions.

Here are some useful resources to get you started:

- Koyeb Documentation: Learn everything you need to know about using Koyeb.

- Koyeb Tutorials: Discover guides and tutorials on common Koyeb use cases and get inspired to create your own!

- Koyeb Community: Join the community chat to stay in the loop about our latest feature announcements, exchange ideas with other developers, and ask our engineering teams whatever questions you may have about going serverless.